Solve Your IT Equipment Needs with Equipment Financing

Aspen Systems partners with Fidelity Capital, LLC to offer financing options for all of your equipment purchases. When you choose to lease our high-performance computing solutions, you conserve cash flow, guard against obsolescence, and realize tax savings for your company. Fidelity’s financing programs use a streamlined application process and can qualify your company within minutes.

Industry-Leading Finance Solutions

Financing programs allow our customers to take advantage of 100% financing for all equipment and software, including shipping, installation, and training.

Finance Aspen Systems Product Offerings

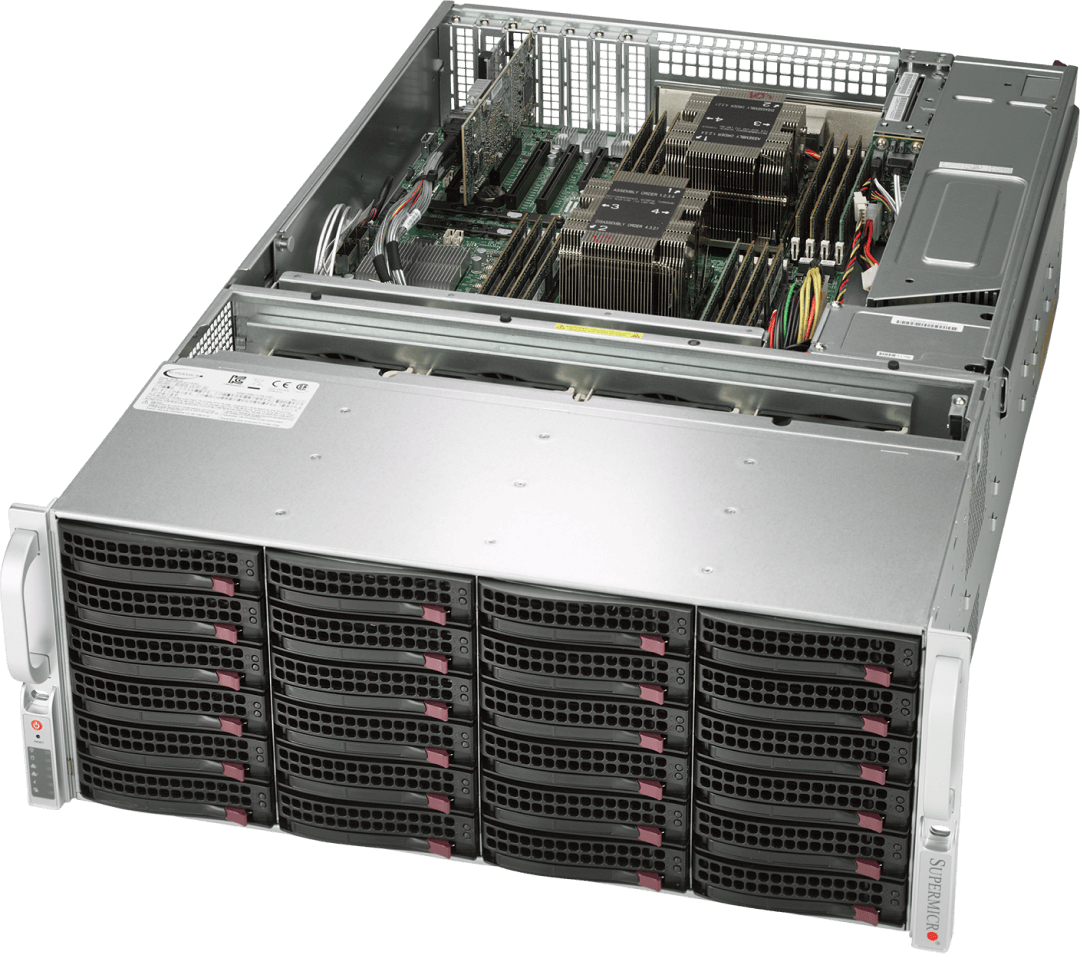

- Servers & Storage Solutions

- High-Performance Computing (HPC) Solutions

- Storage / File Systems

- On-Site Installation/Training

All applications can be faxed to 303.431.7196 or emailed to sales@aspsys.com.
